Dimensional Modeling in Data Warehousing: The Design Pattern That Makes Analytics Intuitive

There’s a profound difference between databases that store data and databases that enable insight.

Operational databases—the ones running your e-commerce site, managing your inventory, processing transactions—are optimized for one purpose: reliably recording what happens. Customer places order, record it. Payment processes, record it. Inventory moves, record it. These systems excel at capturing and updating current state.

But ask these databases analytical questions and they struggle. “What were our top-selling product categories by region last quarter, and how did that compare to the same quarter last year?” This simple business question requires joining a dozen normalized tables, aggregating millions of rows, and navigating complex relationships that make perfect sense for transactional integrity but create nightmares for analysis.

Data warehouses exist to solve this problem—to organize data specifically for analytics rather than operations. But how you organize that data fundamentally determines whether analysts can extract insights efficiently or spend most of their time wrestling with query complexity.

Dimensional modeling is the design approach that makes data warehouses analytically powerful. It reorganizes data around business processes and measurements, creating structures that align naturally with how humans think about business questions rather than how databases enforce transactional rules.

For students, data engineers, and BI developers in Pakistan building warehouses that power business decisions, dimensional modeling isn’t just another database design technique—it’s the foundational pattern that determines whether your warehouse becomes an analytical asset or an expensive exercise in frustration.

At Dicecamp, we teach dimensional modeling not as abstract methodology but as the practical design discipline that makes warehouses actually useful for the people who need to extract insights from them.

The Problem Traditional Database Design Creates

To appreciate dimensional modeling, first understand why traditional database design fails for analytics.

Normalized databases—the standard approach for operational systems—minimize data redundancy through careful decomposition. Customer information lives in one table. Orders in another. Order line items in a third. Products in a fourth. Categories, suppliers, warehouses, shipping methods—each concept gets its own table connected through foreign key relationships.

This normalization delivers enormous benefits for transactional systems: data updates in one place, referential integrity is enforceable, storage is efficient, and write operations are fast.

But it creates analytical challenges:

Query complexity explodes. That simple “top products by region” question requires joining order_items to orders to customers to products to categories to regions—six tables minimum, likely more for complete analysis. Each additional analytical dimension adds more joins.

Performance suffers. Joins are computationally expensive. Joining millions of fact records across a dozen dimension tables, even with good indexes, takes time. Analysts wait. Business questions go unanswered because queries time out.

Intuition fails. Business users don’t think in normalized table structures. They think in business terms: “sales by product category by customer segment by time period.” Translating this natural business thinking into complex multi-table joins requires SQL expertise most business analysts don’t possess.

Dimensional modeling solves these problems by organizing data around how business questions are actually asked, not around how normalized databases store data efficiently.

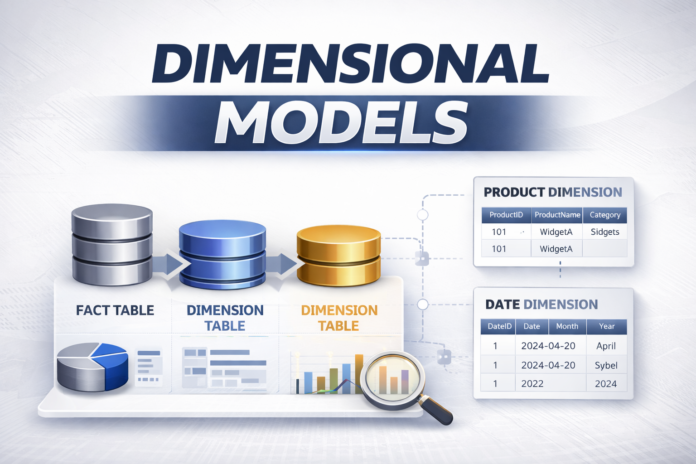

The Core Concept: Facts and Dimensions

Dimensional modeling reorganizes warehouse data into two fundamental components that align with how humans naturally think about business metrics.

Facts are measurements—the numbers you want to analyze. Sales revenue. Units sold. Account balance. Website visits. Service calls. Production output. Anything quantifiable that represents business performance.

Facts answer “how much?” or “how many?” They’re the dependent variables in analysis—the outcomes you’re trying to understand, predict, or optimize.

Dimensions are the contexts surrounding those measurements—the who, what, when, where, and why that make facts meaningful. Which customer? Which product? When did it happen? Where was it shipped? Through which channel?

Dimensions answer the analytical questions that provide context: “Sales were strong” is meaningless. “Sales in Lahore for electronics products through the mobile app during December increased 40% year-over-year” is actionable insight. The dimensions—geography, product category, channel, time—transform raw measurements into business intelligence.

This fact/dimension separation mirrors natural analytical thinking. Business questions consistently follow the pattern: “Show me [measurement] by [dimension] for [dimensional constraints].” “Show me revenue by product category for Q4 2024.” “Show me customer count by acquisition channel for the Karachi region.”

Dimensional modeling structures warehouses to answer these questions efficiently.

Fact Tables: Where Measurements Live

A fact table stores the quantitative measurements at the center of your analysis—typically one row per business event or transaction.

In a retail warehouse, the fact table might record sales transactions:

Sales_Fact

----------

date_key (foreign key → Date dimension)

product_key (foreign key → Product dimension)

customer_key (foreign key → Customer dimension)

store_key (foreign key → Store dimension)

quantity_sold (measurement)

revenue_amount (measurement)

cost_amount (measurement)

profit_amount (measurement)

Notice the structure: foreign keys connecting to dimension tables, plus measurements (the actual numbers being analyzed).

Each row represents a grain—the atomic level of detail being captured. In this case, one row per product sold to a customer on a specific date at a specific store. This grain choice is critical—too detailed and the fact table becomes unwieldy; too aggregated and you lose analytical flexibility.

Fact tables grow large because they record business events. Millions of sales transactions. Billions of web clicks. Hundreds of millions of call detail records. This is where warehouse storage accumulates, and why fact table design matters for performance.

Most measurements in fact tables are additive—they can be summed meaningfully across any dimension. Total revenue, total quantity, total cost—sum them by any dimension combination and the result makes business sense.

Some measurements are semi-additive—they can be summed across some dimensions but not others. Account balance can be summed across customers but not across time (summing daily balances produces meaningless numbers).

A few are non-additive—they can’t be summed at all. Percentages, ratios, unit prices. These require aggregation functions other than SUM.

Understanding these distinctions shapes how you design fact tables and write analytical queries.

Dimension Tables: Where Context Lives

Dimension tables provide the descriptive attributes that make fact measurements meaningful—the “by” part of “analyze revenue by product category.”

A product dimension might contain:

Product_Dimension

-----------------

product_key (surrogate key)

product_id (natural business key)

product_name

product_description

category

subcategory

brand

supplier_name

package_size

launch_date

Notice the richness compared to fact tables. Dimensions carry all the descriptive information—names, categories, attributes, hierarchies—that enable filtering, grouping, and contextualization of facts.

Dimensions are relatively small compared to fact tables. You might have millions of sales transactions but only thousands of products, hundreds of stores, or dozens of sales regions. This size difference is why dimensional modeling performs well—small dimension tables join efficiently to large fact tables.

Dimension attributes enable filtering: “Show me sales for products in the Electronics category.” And grouping: “Group sales by product brand.” And hierarchical analysis: “Show sales by category, drilling down to subcategory and individual product.”

Well-designed dimensions include:

Natural business keys that users recognize (product codes, customer IDs, account numbers). Surrogate keys generated by the warehouse for technical purposes (handling SCDs, ensuring uniqueness). Descriptive attributes that support filtering and grouping. Hierarchies that enable drill-down analysis (Category → Subcategory → Product).

The richer your dimensions, the more analytical flexibility your warehouse provides. But richness requires thoughtful attribute selection—include what supports analysis, exclude what creates noise.

Star Schema: The Dimensional Modeling Classic

The star schema is dimensional modeling’s signature design pattern—one central fact table surrounded by dimension tables, each connected through foreign keys. Visually, it resembles a star, hence the name.

Customer_Dim

|

|

Date_Dim --- Sales_Fact --- Product_Dim

|

|

Store_Dim

This structure directly supports natural analytical queries:

“Sales by product category” joins Sales_Fact to Product_Dim on product_key, groups by category, sums revenue. Two tables, simple join, fast execution.

“Customer purchases over time” joins Sales_Fact to Customer_Dim and Date_Dim, groups by customer and time period. Three tables, straightforward logic.

The simplicity is deliberate. Star schemas optimize for:

Query performance—minimal joins mean fast execution. Business user comprehension—the structure mirrors how business questions are asked. BI tool compatibility—most BI tools are optimized for star schema patterns. Maintenance simplicity—fewer tables with clear purposes mean easier warehouse management.

Star schemas are denormalized by design. Product dimensions include category and subcategory as attributes rather than normalizing them into separate tables. This denormalization trades storage efficiency for query performance—exactly the right trade-off for analytical workloads where read performance matters far more than storage cost.

Snowflake Schema: When Normalization Makes Sense

The snowflake schema normalizes dimension tables into multiple related tables, creating a more complex structure:

Customer_Dim

|

|

Date_Dim --- Sales_Fact --- Product_Dim --- Category_Dim

| |

| |

Store_Dim Brand_Dim

|

|

Region_Dim

Product_Dim now references separate Category and Brand tables rather than storing those as attributes. Store_Dim references a separate Region table.

This normalization reduces redundancy—category names store once in Category_Dim rather than repeated in every product record. It enforces consistency—all products in a category reference the same category record.

But it costs query performance. Simple “sales by category” now requires joining three tables instead of two. Each additional normalization layer adds another join.

Snowflake schemas suit specific scenarios:

Very large dimensions where denormalization would create massive redundancy. Strict data governance requirements where attribute consistency through normalization is critical. Complex hierarchies that change independently and benefit from separate management.

In practice, star schemas dominate business intelligence because query simplicity and performance outweigh the benefits of normalization for most analytical use cases. Use snowflaking selectively, only where it solves a specific problem that denormalization creates.

Dimensional Modeling in the Real World

How do these patterns appear in actual business contexts across Pakistan’s economy?

Retail and e-commerce: Sales fact tables recording transactions, joined to customer, product, store, promotion, and date dimensions. Analysis by product category, customer segment, geographic region, and time period for merchandising, pricing, and marketing decisions.

Banking: Account balance facts, transaction facts, loan origination facts—each with dimensions for customer, product type, branch, channel, and time. Risk analysis, profitability assessment, and regulatory reporting all depend on dimensional models.

Telecommunications: Call detail record facts with subscriber, cell tower location, time, and service type dimensions. Network optimization, capacity planning, and customer behavior analysis flow from dimensional analysis.

Healthcare: Patient encounter facts with patient, provider, procedure, diagnosis, and facility dimensions. Outcome analysis, resource utilization, and cost management rely on dimensional queries.

Manufacturing: Production facts with product, machine, shift, operator, and facility dimensions. Quality control, efficiency analysis, and capacity planning use dimensional models.

These aren’t toy examples—they’re production warehouses processing billions of fact records and supporting critical business decisions daily.

Common Dimensional Modeling Mistakes

Even experienced data warehouse developers make design mistakes that undermine warehouse value.

Choosing the wrong grain creates analysis problems. Grain too detailed produces massive fact tables that perform poorly. Grain too aggregated prevents detailed analysis. Choose grain carefully based on actual business questions, not assumptions.

Mixing grains in a single fact table creates confusion and errors. Don’t combine daily summary facts with transaction-level facts in one table. Separate fact tables with clear, consistent grain avoid this problem.

Dimension bloat happens when unnecessary attributes get added “just in case.” Every dimension attribute carries storage and ETL cost. Include attributes that support actual analysis, question others.

Fact/dimension confusion occurs when designers put dimensional attributes in fact tables or measurements in dimensions. Customer name belongs in customer dimension, not fact table. Revenue belongs in facts, not product dimension. Maintain clean separation.

Ignoring slowly changing dimensions makes historical analysis unreliable when dimension attributes change over time. Implement appropriate SCD techniques for time-variant attributes.

Forgetting hierarchy design in dimensions limits drill-down analysis. Plan hierarchies explicitly—category to subcategory to product, year to quarter to month to day.

Prevention comes through understanding dimensional modeling principles deeply and validating designs against actual analytical requirements before implementation.

Why Dimensional Modeling Mastery Matters

Pakistan’s organizations increasingly compete on data-driven decision making. The warehouses supporting that intelligence require design that makes analysis efficient and insights accessible.

Data Engineers and BI Developers who understand dimensional modeling design warehouses that actually get used, versus technically correct databases that analysts struggle with. This practical effectiveness drives career value.

Salary premiums for dimensional modeling expertise are substantial—40-60% higher than basic database skills because the impact on business intelligence effectiveness is direct and measurable.

Beyond immediate career benefits, dimensional modeling teaches design thinking that transfers across data contexts: understanding how structure enables or impedes analysis, balancing theoretical purity against practical performance, and designing for user needs rather than technical elegance.

Explore Dicecamp – Start Your Data Engineering Journey Today

Explore Dicecamp – Start Your Data Engineering Journey Today

Whether you’re a student, working professional, or career switcher in Pakistan, Dicecamp provides structured learning paths to help you master Data Engineering Infrastructure with real-world skills.

Choose the learning option that fits you best:

Data Engineer Paid Course (Complete Professional Program)

Data Engineer Paid Course (Complete Professional Program)

A full, in-depth DevOps training program covering Virtualization, Linux, Cloud, CI/CD, Docker, Kubernetes, and real projects. Ideal for serious learners aiming for jobs and freelancing.

Data Engineer Free Course (Beginner Friendly)

Data Engineer Free Course (Beginner Friendly)

New to DevOps or IT infrastructure? Start with our free course and build your foundation in Linux, Virtualization, and DevOps concepts.

Your Next Step

Data warehouses exist to enable insight. Structure determines whether that insight emerges easily or remains buried under query complexity.

Dimensional modeling is the design discipline that creates analytically powerful warehouses—structures aligned with business thinking, optimized for analytical performance, and accessible to the people who need to extract intelligence.

Whether you’re building your first warehouse or deepening existing data engineering skills, dimensional modeling mastery is the foundation that determines whether your warehouses become genuine business assets.

At Dicecamp, we’re ready to help you build that mastery through hands-on warehouse design that emphasizes practical dimensional modeling for real analytical requirements.

Master dimensional modeling with Dicecamp and design data warehouses that turn data into insight.

📲 Message Dice Analytics on WhatsApp for more information:

https://wa.me/923405199640

Common Questions About Dimensional Modeling

When should I use star schema versus snowflake schema?

Default to star schema for most business intelligence scenarios—the simplicity and performance advantages outweigh normalization benefits. Consider snowflaking only for very large dimensions where denormalization creates significant redundancy, or when data governance requires strict attribute consistency through normalization. If uncertain, star schema is the safer choice.

How detailed should my fact table grain be?

Choose grain based on the most detailed analysis business users actually need. If they analyze individual transactions, use transaction-level grain. If daily summaries suffice, aggregate to daily grain. Going more detailed than needed creates performance problems; going less detailed than needed prevents answering legitimate business questions. Interview actual users about their analytical needs.

Can I have multiple fact tables in one dimensional model?

Absolutely. Most warehouses have multiple fact tables representing different business processes—sales facts, inventory facts, customer service facts—each potentially sharing common dimensions like customer, product, and date. This creates a constellation schema where multiple stars share dimension tables, enabling cross-process analysis.

How do I handle many-to-many relationships in dimensional models?

Create a factless fact table (also called a bridge table) that records the relationship itself. For example, students enrolled in courses creates a many-to-many relationship. The fact table records enrollment events with foreign keys to student and course dimensions, potentially with enrollment date and status as attributes. This converts the many-to-many relationship into dimensional model structures.